Manifest Weekly #07

More local model support and more customizable routing.

What we shipped this week

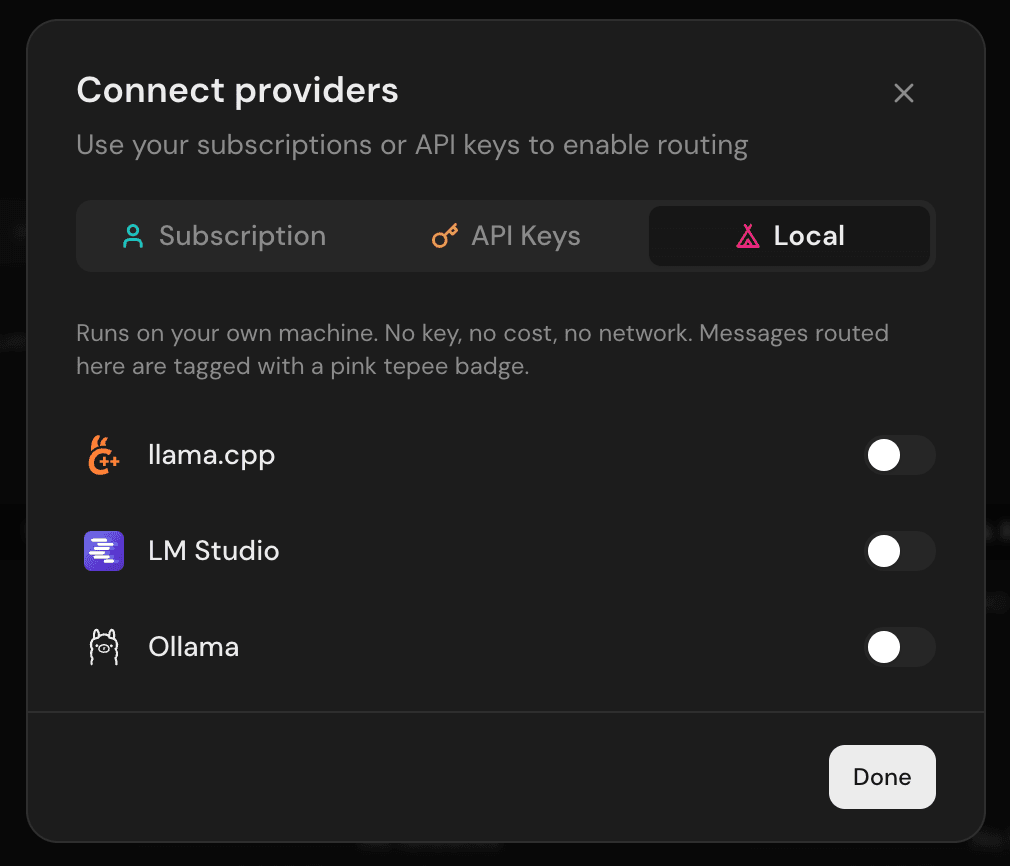

LM Studio and llama.cpp are built-in providers

Self-hosted Manifest now detects LM Studio and llama.cpp running on your machine. With Ollama already supported, that covers the three local runtimes most people actually use. Messages routed locally now show a house badge, so you always know what stayed on your machine.

Custom routing by header

You can now define custom routing tiers triggered by a header. Send x-manifest-tier: premium (or whatever you name your tier) and Manifest routes straight to that tier’s model. Useful when your agent runs the same type of task frequently and you want full control over which model handles it.
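Here is a rough sketch of what sending that header could look like from Python. Only the `x-manifest-tier` header comes from this release; the base URL, the `/v1/chat/completions` path, the bearer-token auth, and the `"auto"` model value are placeholder assumptions, so swap in whatever your own setup uses.

```python
import json
import urllib.request

# Placeholder address for a self-hosted Manifest instance (assumption).
MANIFEST_URL = "http://localhost:3001"


def build_tier_request(base_url: str, api_key: str, tier: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat request pinned to a custom routing tier."""
    body = {
        "model": "auto",  # hypothetical value; use the model name your setup expects
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",  # assumed OpenAI-style path
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "x-manifest-tier": tier,  # the routing header described above
        },
        method="POST",
    )


request = build_tier_request(MANIFEST_URL, "YOUR_MANIFEST_KEY", "premium", "Summarize this diff.")
# To actually send it: urllib.request.urlopen(request)
```

Building the request separately from sending it makes it easy to check that the tier header is set before any traffic leaves your agent.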

OpenAI Responses API

Manifest now proxies /v1/responses. Models like gpt-4.1-codex, o4-mini-deep-research, and o1-pro only work through that endpoint, so they were unreachable before.
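As an illustration, a request to the proxied endpoint might be built like this. The `/v1/responses` path and the model name come from the note above, and `model` plus `input` are the basic fields of OpenAI's Responses API; the base URL and bearer-token auth are placeholder assumptions about your Manifest instance.

```python
import json
import urllib.request

# Placeholder address for a self-hosted Manifest instance (assumption).
MANIFEST_URL = "http://localhost:3001"


def build_responses_request(base_url: str, api_key: str, model: str, text: str) -> urllib.request.Request:
    """Build (but do not send) a Responses API call routed through Manifest."""
    body = {
        "model": model,  # e.g. one of the Responses-only models listed above
        "input": text,   # the Responses API takes `input` rather than `messages`
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/responses",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        },
        method="POST",
    )


request = build_responses_request(MANIFEST_URL, "YOUR_MANIFEST_KEY", "o1-pro", "Explain this stack trace.")
# To actually send it: urllib.request.urlopen(request)
```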

Duplicate your agent

- You can now duplicate an agent with all its providers, tiers, and overrides in one click.

Fixes

- Deleted custom providers kept intercepting every request after removal (#1629)

- Dashboard was blank when accessed from another device on the LAN (#1633)

- Cost column showed “—” for messages routed through custom providers (#1690)

- Custom providers now support Anthropic-compatible endpoints alongside OpenAI-compatible ones (#1694)

Community contributions

Thanks to @guillaumegay13 for building the Responses API endpoint.

What’s coming next

- Routing analytics: which tiers handle what share of your traffic

- Agent grouping across workspaces

— The Manifest team