How MyTrainer Uses Manifest Routing to Control AI Inference Costs

MyTrainer is an AI personal trainer mobile app.

The product uses LLMs across several parts of the experience:

- onboarding is a conversational AI flow;

- workout and nutrition plans are generated from the user context;

- the coaching chat can use tools to update workouts and meals;

- complex program changes can run asynchronously in the background.

Before integrating Manifest, most AI requests were sent through a classic OpenAI API-key path. At my current usage, the coaching chat alone was reaching a run-rate of roughly $20/day, or about $600/month.

The goal was not to route every request to the cheapest possible model. The goal was to make routing match the product workload:

- high-volume coaching chat should have predictable cost;

- deterministic workout generation should keep API-level control;

- complex planning should use a stronger reasoning route;

- fallback should remain available when a provider reaches a limit or fails.

Manifest became the routing layer for that.

MyTrainer has different AI workloads

The first important distinction is that MyTrainer is not one LLM call.

Different workflows have different constraints.

| Workload | Example | Main requirement |

|---|---|---|

| Onboarding | Understand goals, lifestyle, constraints, injuries, equipment | Context quality |

| Workout generation | Build a structured training plan | Determinism and schema reliability |

| Nutrition generation | Build nutrition guidance and meals | Consistency |

| Coaching chat | Answer day-to-day questions | Cost, latency, helpfulness |

| Tool-using chat | Modify a session or meal | Tool correctness |

| Complex planning | Replan a week after travel, injury, or missed sessions | Reasoning quality |

Routing all of these through the same endpoint is convenient, but it is not ideal.

A quick coaching answer does not need the same route as a full program replanning task. Deterministic workout generation does not have the same requirements as a conversational message.

This is where routing becomes an application design decision, not just an infrastructure detail.

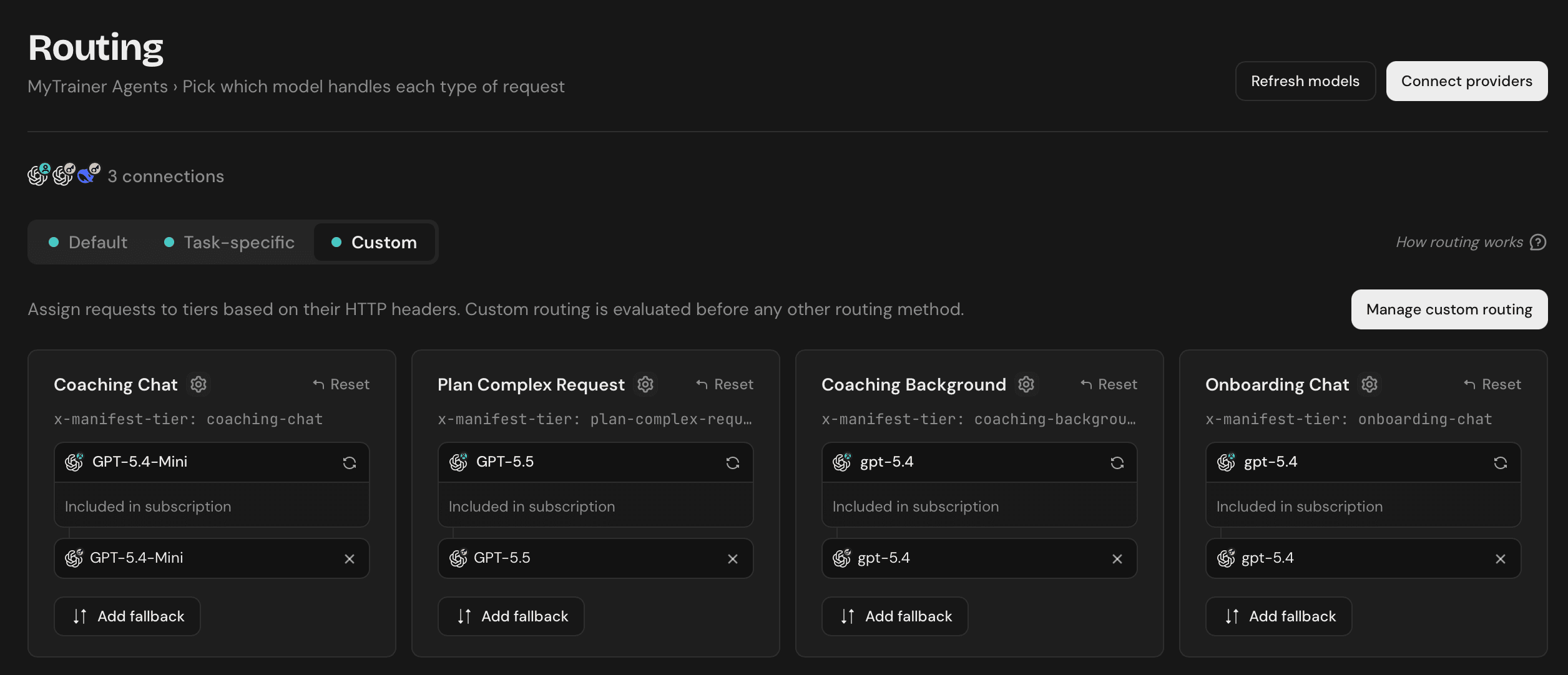

For now, MyTrainer routes four workloads through Manifest: coaching chat, plan_complex_request (the planning decision made from inside the chat), coaching background (the async agent loop that executes the plan), and onboarding chat. Workout and nutrition generation still go through the direct OpenAI API for the reasons covered in the next section. The migration is intentionally staged — I want each workload moved with confidence rather than flipped over all at once.

Moving coaching chat to a subscription-backed route

Manifest sits between MyTrainer and the model providers.

Before:

MyTrainer backend

-> OpenAI API key

-> model response

After:

MyTrainer backend

-> Manifest

-> subscription-backed provider or API-key provider

-> model response

For the coaching chat, I connected a dedicated OpenAI/ChatGPT subscription-backed provider through Manifest.

The OpenAI API key did not disappear. It became the fallback route.

Coaching chat

-> Manifest

-> OpenAI subscription-backed provider

-> OpenAI API key fallback

That changed the cost profile of the highest-volume part of the app.

Previously, every coaching message contributed to variable API usage. After the integration, most coaching chat traffic could go through a predictable subscription-backed route, while the API key remained available if fallback was needed.

At my current usage, the primary path moved from roughly $20/day (about $600/month) to a flat $20/month subscription, plus API-key fallback usage when needed.
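
The arithmetic behind that comparison can be sketched as follows. The $20/day and $20/month figures come from the text above; the 30-day month and the 5% fallback share are illustrative assumptions, not measured values.

```python
DAYS_PER_MONTH = 30  # assumption: a 30-day month, matching the ~$600/month estimate


def monthly_cost_api_only(daily_api_cost: float) -> float:
    """Monthly cost when every request is billed per token through the API key."""
    return daily_api_cost * DAYS_PER_MONTH


def monthly_cost_with_subscription(
    daily_api_cost: float, subscription_fee: float, fallback_share: float
) -> float:
    """Flat subscription fee plus the share of traffic that still falls back to the API key."""
    fallback_cost = daily_api_cost * DAYS_PER_MONTH * fallback_share
    return subscription_fee + fallback_cost


before = monthly_cost_api_only(20.0)
# fallback_share=0.05 is a hypothetical value chosen only to illustrate the formula.
after = monthly_cost_with_subscription(20.0, 20.0, fallback_share=0.05)
print(f"before: ${before:.2f}/month, after: ${after:.2f}/month")
```

The useful property is that the monthly bill now scales with the fallback share rather than with total chat volume.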

Subscription endpoints do not always have API parity

One important implementation detail is that a subscription-backed OpenAI route is not exactly the same as the public OpenAI API.

It behaves more like a Codex-style subscription endpoint. The request format can be similar, but not every parameter is necessarily supported in the same way.

For MyTrainer, this matters because some workflows depend on specific parameters.

For workout generation, I often set temperature to 0. The output becomes product state: sessions, exercises, sets, repetitions, rest times, weekly distribution, and progression logic. In that context, determinism is important. I do not want the model to be creative if it makes the result less stable.

Coaching chat is different. For day-to-day coaching messages, exact sampling behavior is less important. I care more about usefulness, tool correctness, latency, and cost.

That is an important point: routing is not only about cost. It is also about endpoint compatibility.

A cheaper route that removes a parameter you rely on is not cheaper. It is a hidden reliability risk.
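
One way to make that risk explicit is to check a request's parameters against what each route is known to accept before choosing it. The sketch below is a minimal illustration: the `Route` type, the route names, and the capability sets are hypothetical, and the real list of parameters a subscription-backed endpoint supports has to be verified against the provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    name: str
    supported_params: frozenset


# Hypothetical capability sets, for illustration only.
SUBSCRIPTION = Route("openai-subscription", frozenset({"model", "messages", "tools"}))
API_KEY = Route(
    "openai-api-key",
    frozenset({"model", "messages", "tools", "temperature", "response_format"}),
)


def unsupported_params(route: Route, request: dict) -> list:
    """Return the request parameters this route is not known to support."""
    return sorted(set(request) - route.supported_params)


def pick_route(request: dict, preferred: Route, fallback: Route) -> Route:
    """Prefer the cheaper route, but only if it accepts every parameter in the request."""
    if not unsupported_params(preferred, request):
        return preferred
    if not unsupported_params(fallback, request):
        return fallback
    raise ValueError(f"no route supports: {unsupported_params(fallback, request)}")


chat_request = {"model": "chat-model", "messages": []}
workout_request = {"model": "plan-model", "messages": [], "temperature": 0}

chat_route = pick_route(chat_request, SUBSCRIPTION, API_KEY)
workout_route = pick_route(workout_request, SUBSCRIPTION, API_KEY)
```

With these assumed capability sets, the chat request stays on the subscription route while the `temperature: 0` workout request is kept on the API-key route instead of silently losing the parameter.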

Asynchronous planning with plan_complex_request

The coaching chat can use tools such as:

- create_session;

- modify_session;

- create_meal;

- update_meal;

- plan_complex_request.

Most tools are direct. If a user asks to replace one exercise, the agent can call modify_session and answer immediately.

plan_complex_request is different.

It is used for broader changes:

- “I’m going on holiday next week and I’ll only have dumbbells.”

- “I missed three workouts. Rebuild the rest of the week.”

- “My shoulder hurts. Remove pressing movements for the next two weeks.”

- “I can only train three times this week instead of five.”

These requests affect the structure of the program. They require planning, tool calls, validation, and persistence.

They also do not need to block the chat.

From the user’s perspective, the coach answers immediately:

“I’ll adapt your week based on those constraints and notify you when the updated plan is ready.”

Behind the scenes:

1. User sends a complex request

2. The chat agent calls `plan_complex_request`

3. MyTrainer creates an asynchronous planning task

4. The chat returns an acknowledgement

5. A background agent loop starts

6. The agent loads the user context:

- current program

- upcoming sessions

- goals

- injuries

- equipment

- schedule

- recent conversation context

7. The agent plans the changes

8. The agent applies them through internal tools

9. MyTrainer persists the updated state

10. The user receives a notification when it is complete
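
The steps above can be sketched with `asyncio`. This is a simplified illustration, not MyTrainer's implementation: `PlanningTask`, `run_background_agent`, and `handle_plan_complex_request` are hypothetical names, the model and tool calls are replaced by a placeholder `await`, and a production system would more likely hand the task to a persistent job queue than to an in-process task.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class PlanningTask:
    user_id: str
    request: str
    status: str = "pending"
    log: list = field(default_factory=list)


async def run_background_agent(task: PlanningTask) -> None:
    """Steps 5-10: load context, plan, apply tools, persist, notify."""
    task.status = "running"
    task.log.append("load_context")
    await asyncio.sleep(0)  # placeholder for model and tool calls
    task.log.append("plan_changes")
    task.log.append("apply_tools")
    task.log.append("persist_state")
    task.status = "done"
    task.log.append("notify_user")


async def handle_plan_complex_request(user_id: str, request: str):
    """Steps 2-4: create the task, start it in the background, acknowledge at once."""
    task = PlanningTask(user_id, request)
    job = asyncio.create_task(run_background_agent(task))
    ack = "I'll adapt your week and notify you when the updated plan is ready."
    return ack, task, job


async def main():
    ack, task, job = await handle_plan_complex_request(
        "user-1", "I missed three workouts. Rebuild the rest of the week."
    )
    status_at_ack = task.status  # the chat has answered before the agent has run
    await job  # in the sketch we wait here only so the script can finish
    return ack, status_at_ack, task


ack, status_at_ack, task = asyncio.run(main())
```

The acknowledgement is returned while the task is still pending, which is the property that keeps the chat from blocking.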

This is not just another chat completion. It is a background agent workflow.

Because it has more ways to fail, I route it differently — and I split it into two distinct tiers.

The chat agent’s plan_complex_request tool call is the planning decision: deciding the new shape of the program based on the user’s constraints. That call uses:

x-manifest-tier: plan-complex-request

The background agent loop that runs after the acknowledgement — loading context, calling internal tools, persisting state — is a different workload. It uses:

x-manifest-tier: coaching-background

Coaching chat sends its own tier header:

x-manifest-tier: coaching-chat

And onboarding, which has its own conversational profile, sends:

x-manifest-tier: onboarding-chat
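
A minimal sketch of how a backend might attach these tier headers. The header name and the four tier values come from the text above; the `Workload` enum, the `manifest_headers` helper, and the bearer-token `Authorization` header are illustrative assumptions, not Manifest's documented client API.

```python
from enum import Enum

TIER_HEADER = "x-manifest-tier"


class Workload(Enum):
    COACHING_CHAT = "coaching-chat"
    PLAN_COMPLEX_REQUEST = "plan-complex-request"
    COACHING_BACKGROUND = "coaching-background"
    ONBOARDING_CHAT = "onboarding-chat"


def manifest_headers(workload: Workload, api_key: str) -> dict:
    """Build request headers that tag the call with its routing tier."""
    return {
        "Authorization": f"Bearer {api_key}",  # assumption: bearer-token auth
        "Content-Type": "application/json",
        TIER_HEADER: workload.value,
    }


headers = manifest_headers(Workload.PLAN_COMPLEX_REQUEST, api_key="example-key")
```

Keeping the tier in one enum means the application names the workload once, and the mapping from tier to model stays in the routing configuration.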

In my current Manifest configuration, the plan-complex-request tier is mapped to GPT-5.5, while coaching-background, onboarding-chat, and the day-to-day coaching-chat tier all stay on GPT-5.4 / GPT-5.4-Mini through the subscription-backed provider. Using a stronger model for the planning decision is justified: it is the moment that determines whether the new program will be coherent. The execution loop that follows is more mechanical, and a smaller model handles it well.

In my evals, this final Manifest configuration performed better than my previous setup, where coaching and planning were mostly handled by GPT-5.4-Mini alone. The point is not only that the default path became cheaper. It is that the expensive intelligence is now reserved for the workflow where it has the most product impact.

The product knows this is a complex planning operation. Manifest owns the route. The model receives the task through the right inference path.

Routing matrix

The current routing strategy is simple.

| Operation | Model | Fallback | Reason |

|---|---|---|---|

| Standard coaching chat | GPT-5.4-Mini (Manifest, subscription) | GPT-5.4-Mini via OpenAI API key | High volume, predictable cost |

| plan_complex_request (planning decision) | GPT-5.5 (Manifest, subscription) | GPT-5.5 via OpenAI API key | Reasoning quality where it matters most |

| Coaching background agent loop | GPT-5.4 (Manifest, subscription) | GPT-5.4 via OpenAI API key | Async execution of the planned changes |

| Onboarding chat | GPT-5.4 (Manifest, subscription) | GPT-5.4 via OpenAI API key | Conversational onboarding, separated from coaching traffic |

| Workout generation | OpenAI API direct, temperature: 0 | None | Determinism and structure |

| Nutrition generation | OpenAI API direct | None | Structured output |
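
The same matrix can be written down as data, which makes the fallback order explicit and easy to test. This is a sketch of one possible representation: the `RouteSpec` type, the `candidates` helper, and the `workout-generation` and `nutrition-generation` keys are hypothetical, and in practice the mapping lives in the Manifest configuration rather than in application code.

```python
from typing import NamedTuple, Optional


class RouteSpec(NamedTuple):
    primary: str
    fallback: Optional[str]
    via_manifest: bool


ROUTING = {
    "coaching-chat": RouteSpec("GPT-5.4-Mini (subscription)", "GPT-5.4-Mini (API key)", True),
    "plan-complex-request": RouteSpec("GPT-5.5 (subscription)", "GPT-5.5 (API key)", True),
    "coaching-background": RouteSpec("GPT-5.4 (subscription)", "GPT-5.4 (API key)", True),
    "onboarding-chat": RouteSpec("GPT-5.4 (subscription)", "GPT-5.4 (API key)", True),
    # Hypothetical keys for the two workloads that still bypass Manifest.
    "workout-generation": RouteSpec("OpenAI API direct, temperature 0", None, False),
    "nutrition-generation": RouteSpec("OpenAI API direct", None, False),
}


def candidates(operation: str) -> list:
    """Return the routes to try for an operation, in order: primary first, then fallback."""
    spec = ROUTING[operation]
    return [spec.primary] if spec.fallback is None else [spec.primary, spec.fallback]


planning_routes = candidates("plan-complex-request")
workout_routes = candidates("workout-generation")
```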

The main idea is that each operation has a different failure mode.

A coaching answer can be slightly different and still be useful. A workout program needs structure. A complex planning task needs reasoning quality.

Routing lets the application reflect those differences.

Evaluating routing changes

I use Langfuse evaluations to test important prompt, model, and routing changes.

For MyTrainer, quality is not only whether the answer sounds good. Many AI outputs affect product state.

Examples of evaluation scenarios:

- replacing an exercise while preserving the session objective;

- moving a workout without breaking recovery;

- adapting a week to limited equipment;

- modifying a meal while preserving constraints;

- deciding whether a request should become an async planning task.
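
Because these scenarios are about product state, a check can compare the plan before and after the agent acts instead of scoring the chat text. The sketch below covers the first scenario only. The `Exercise` and `Session` types and the specific rules are illustrative assumptions, and it deliberately leaves out the Langfuse integration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exercise:
    name: str
    muscle_group: str
    equipment: str


@dataclass(frozen=True)
class Session:
    objective: str
    exercises: tuple


def check_exercise_replacement(before, after, removed, allowed_equipment):
    """Return a list of failure messages; an empty list means the check passed."""
    failures = []
    if after.objective != before.objective:
        failures.append("session objective changed")
    if len(after.exercises) != len(before.exercises):
        failures.append("exercise count changed")
    if any(e.name == removed for e in after.exercises):
        failures.append("removed exercise still present")
    old = next((e for e in before.exercises if e.name == removed), None)
    added = [e for e in after.exercises if e not in before.exercises]
    if old and any(e.muscle_group != old.muscle_group for e in added):
        failures.append("replacement targets a different muscle group")
    if any(e.equipment not in allowed_equipment for e in after.exercises):
        failures.append("exercise uses unavailable equipment")
    return failures


bench = Exercise("Bench press", "chest", "barbell")
row = Exercise("Dumbbell row", "back", "dumbbell")
press = Exercise("Dumbbell press", "chest", "dumbbell")
squat = Exercise("Back squat", "legs", "barbell")

before = Session("upper-body strength", (bench, row))
good = Session("upper-body strength", (press, row))
bad = Session("upper-body strength", (squat, row))

good_failures = check_exercise_replacement(before, good, "Bench press", {"dumbbell"})
bad_failures = check_exercise_replacement(before, bad, "Bench press", {"dumbbell"})
```

A check like this returns the same verdict regardless of how the model phrased its reply, which is what makes it usable for comparing routes.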

For complex planning, I care about the final state of the plan, not just the initial chat response.

This is also where the GPT-5.5 reasoning route performed particularly well. With the current Manifest configuration, and before adding the future notification path or Complexity Routing, the eval results for complex planning are better than the previous GPT-5.4-Mini-only baseline, while the high-volume coaching traffic still uses a more predictable-cost route.

This is why routing and evals should go together. Cost optimization is only useful if the product quality remains stable.

Results so far

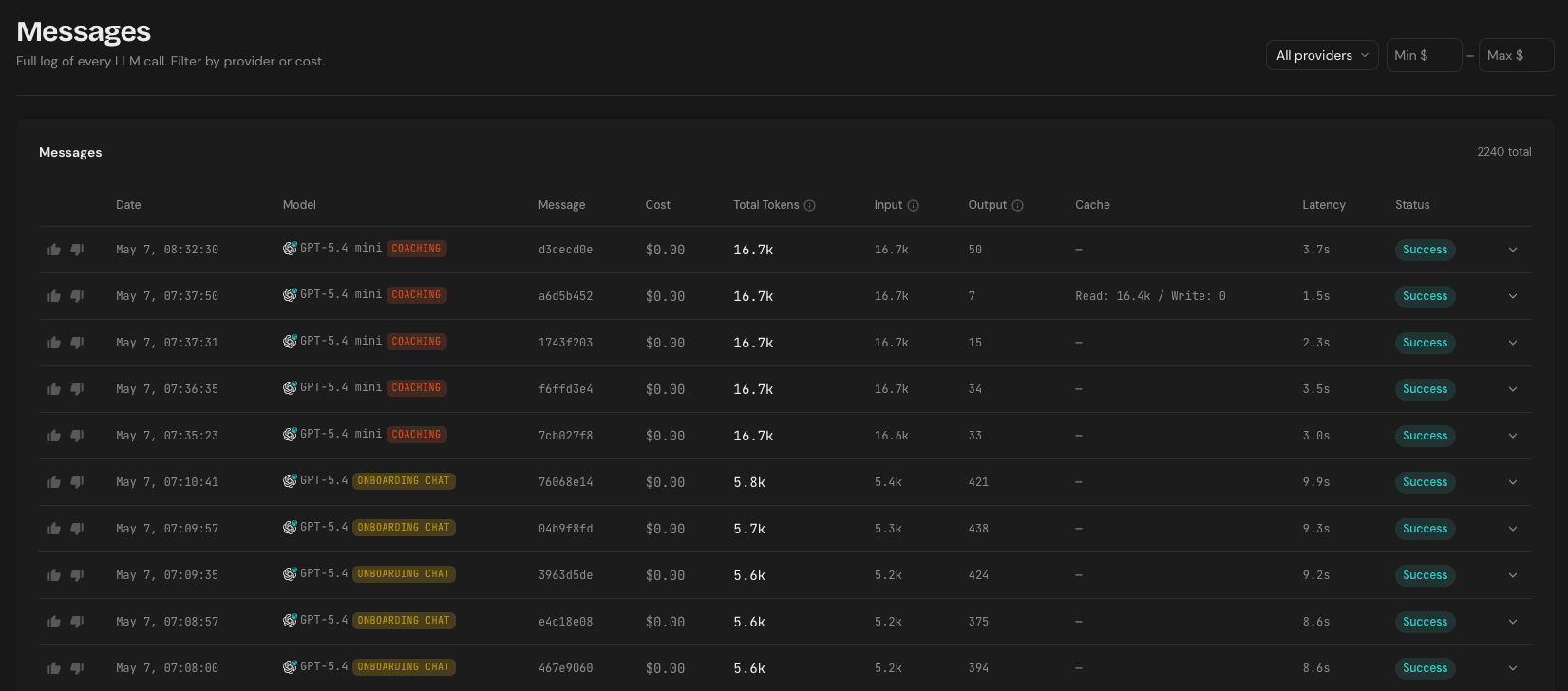

The main result is that MyTrainer now has a more predictable inference architecture. The highest-volume path, coaching chat, moved from a per-token API-key default to a subscription-backed route with API-key fallback.

The Messages log makes this concrete: every routed call — both coaching chat and onboarding chat — is now resolved through the subscription-backed route at $0.00 marginal cost, while still surfacing token counts and latency for observability.

The broader result is architectural: AI work is now routed based on what the product is actually doing, instead of every request going through the same model path. That is a better fit for an AI-native app.

What’s next

Three things I want to explore next.

1. Moving workout and nutrition generation to Manifest

Workout and nutrition generation still go through the direct OpenAI API today because they depend on temperature: 0 and very specific structured-output behavior. The plan is to add a Manifest tier that routes them through the OpenAI API key directly: determinism stays intact, but the workload moves behind the same routing layer as everything else, which makes evals, fallback, and observability consistent across the stack.

2. Moving the notification path to Manifest

MyTrainer notifications are already generated by AI. The model can decide the notification title, content, and timing.

For example, MyTrainer may generate a motivational message before a planned workout. But if the user completes the workout before the notification is sent, the original message becomes stale. In that case, the system should be able to check the latest user state and either cancel the notification or rewrite it into something more relevant, such as a congratulatory message.

A future notification flow could look like this:

AI generates the notification

-> notification is scheduled

-> MyTrainer reloads the latest user state before sending

-> guardrail decides whether it is still relevant

-> notification is sent, rewritten, or cancelled
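
The guardrail step in that flow could be as simple as a pure function over the latest user state. The sketch below is hypothetical: the types, the field names, and the rule that a removed session cancels the notification are assumptions added for illustration. Only the completed-workout case comes from the example above.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    SEND = "send"
    REWRITE = "rewrite"
    CANCEL = "cancel"


@dataclass(frozen=True)
class ScheduledNotification:
    session_id: str
    body: str


@dataclass
class UserState:
    completed_session_ids: set = field(default_factory=set)
    removed_session_ids: set = field(default_factory=set)


def guardrail(notification: ScheduledNotification, state: UserState) -> Decision:
    """Decide, just before sending, whether the scheduled message is still relevant."""
    if notification.session_id in state.removed_session_ids:
        return Decision.CANCEL  # assumption: the session no longer exists in the plan
    if notification.session_id in state.completed_session_ids:
        return Decision.REWRITE  # e.g. turn motivation into congratulations
    return Decision.SEND


reminder = ScheduledNotification("session-42", "Your workout starts in an hour.")
fresh = guardrail(reminder, UserState())
stale = guardrail(reminder, UserState(completed_session_ids={"session-42"}))
gone = guardrail(reminder, UserState(removed_session_ids={"session-42"}))
```

Only the `REWRITE` branch would need a model call, which is part of why this workload could justify its own, smaller route.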

This is a different workload from the coaching agent. Notifications are shorter, more constrained, and usually do not need the same model as a tool-using agent. I will probably test a dedicated notification route, with a model optimized for concise writing, timing, and context freshness.

3. Exploring Manifest Complexity Routing for coaching

Manifest already offers Complexity Routing, but I have not integrated it yet.

Today, MyTrainer uses explicit routing for the clearest case: plan_complex_request goes to the reasoning tier because the product already knows it is a complex asynchronous planning task.

The next step is to make routing more granular for coaching requests.

Some coaching messages are simple Q&A. Some require one tool call. Some require multiple tool calls. Some should become asynchronous tasks. Those requests should not necessarily use the same route.

A more advanced routing matrix could look like this:

| Coaching request type | Possible route |

|---|---|

| Simple answer | Default subscription-backed route |

| Single tool call | Standard route |

| Multi-step tool use | Stronger route |

| Program-level planning | Reasoning route |

| Async planning workflow | Reasoning route + background task |
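
Read as code, the table above is a small decision function. The sketch below is only an illustration of that mapping: the `RequestSignals` fields are hypothetical inputs that something upstream would have to estimate, and this is not how Manifest's Complexity Routing works internally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RequestSignals:
    expected_tool_calls: int
    program_level: bool = False
    needs_async: bool = False


def possible_route(signals: RequestSignals) -> str:
    """Map a coaching request to a route, checking the most demanding case first."""
    if signals.needs_async:
        return "reasoning route + background task"
    if signals.program_level:
        return "reasoning route"
    if signals.expected_tool_calls > 1:
        return "stronger route"
    if signals.expected_tool_calls == 1:
        return "standard route"
    return "default subscription-backed route"


simple = possible_route(RequestSignals(expected_tool_calls=0))
multi_step = possible_route(RequestSignals(expected_tool_calls=3))
replanning = possible_route(
    RequestSignals(expected_tool_calls=4, program_level=True, needs_async=True)
)
```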

This will probably be a second step after the Manifest migration is complete.

The long-term goal is not to add routing complexity for its own sake. It is to make the AI infrastructure adapt to the work being performed, instead of forcing every request through the same model path.

Conclusion

Integrating Manifest did not require rebuilding MyTrainer’s AI stack.

The main change was moving model selection into a routing layer that supports subscription providers, API-key fallback, and custom routing tiers.

For MyTrainer, this made coaching chat costs much more predictable while keeping control where it matters: deterministic generation, complex asynchronous planning, and reliability.

The broader lesson is simple: AI applications should not treat every request as equivalent.

A quick coaching reply, a structured workout generation, and a background planning workflow all have different requirements.

Routing gives the application a way to reflect those differences.