What is an LLM Router?

An LLM Router is a piece of software that directs prompts to different models. Instead of sending every request to the same model, the router picks a model for each query. LLM Routing serves three main purposes:

- Cost saving: Using a cheaper model when handling easy tasks

- Specialization: Using specialist models when needed

- Availability: Using fallback models when one is down, or load balancing across models

LLM Routers do the routing automatically, so the user experience stays smooth and frictionless. They differ from LLM Gateways, which focus on managing traffic and ensuring observability and governance rather than on choosing the right model for the job.
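To make the availability use case concrete, here is a minimal sketch of a fallback chain in Python. It is an illustration only: the model names and the `call_model` callable are hypothetical placeholders for whatever client you use, not part of any specific router.

```python
from typing import Callable, Sequence, Tuple


class AllModelsFailed(Exception):
    """Raised when every model in the fallback chain fails."""


def call_with_fallback(
    prompt: str,
    models: Sequence[str],
    call_model: Callable[[str, str], str],
) -> Tuple[str, str]:
    """Try each model in order and return (model_name, response).

    `call_model(model, prompt)` is a hypothetical function supplied by the
    caller; it is assumed to raise an exception when the model is down.
    """
    errors = []
    for model in models:
        try:
            return model, call_model(model, prompt)
        except Exception as exc:  # broad on purpose: any failure triggers fallback
            errors.append(f"{model}: {exc}")
    raise AllModelsFailed("; ".join(errors))
```

The caller only sees the response from the first model that succeeds, which is what keeps the experience smooth when a provider is unavailable.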

Rule-based routing vs AI-powered routing

LLM Routers fall into two approaches: rule-based routers, which are programmatic, and AI-powered routers, which use a model to make the routing decision.

Rule-based routers, like Manifest, are the simplest to implement: they are fast, deterministic and can run anywhere (client or server). AI-powered routers, on the other hand, are more powerful and adapt to more use cases, but they incur inference costs and add latency to each query.
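As a rough illustration of the rule-based approach, the sketch below routes a prompt using only keyword patterns and prompt length. The model names, keywords and the 200-word threshold are all assumptions made for this example; they do not describe how Manifest or any other router actually decides.

```python
import re

# Hypothetical model names, used only for illustration.
CHEAP_MODEL = "small-model"
STRONG_MODEL = "large-model"
CODE_MODEL = "code-model"

# Assumed keyword rules; a real router would tune these for its own traffic.
CODE_HINTS = re.compile(r"\b(traceback|refactor|compile|function)\b", re.IGNORECASE)
REASONING_HINTS = re.compile(r"\b(step by step|prove|analyze|compare)\b", re.IGNORECASE)

LONG_PROMPT_WORDS = 200  # assumed threshold for "demanding" prompts


def route(prompt: str) -> str:
    """Pick a model name using fixed rules: no model call, no randomness."""
    if CODE_HINTS.search(prompt):
        return CODE_MODEL
    if len(prompt.split()) > LONG_PROMPT_WORDS or REASONING_HINTS.search(prompt):
        return STRONG_MODEL
    return CHEAP_MODEL
```

Because the decision is a pure function of the prompt text, the same prompt always goes to the same model, and the check costs no inference and adds almost no latency.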

Some hybrid approaches are quite effective, but they inherit all the drawbacks of AI-powered routers (non-determinism, cost, latency, infrastructure), even if in reduced form.

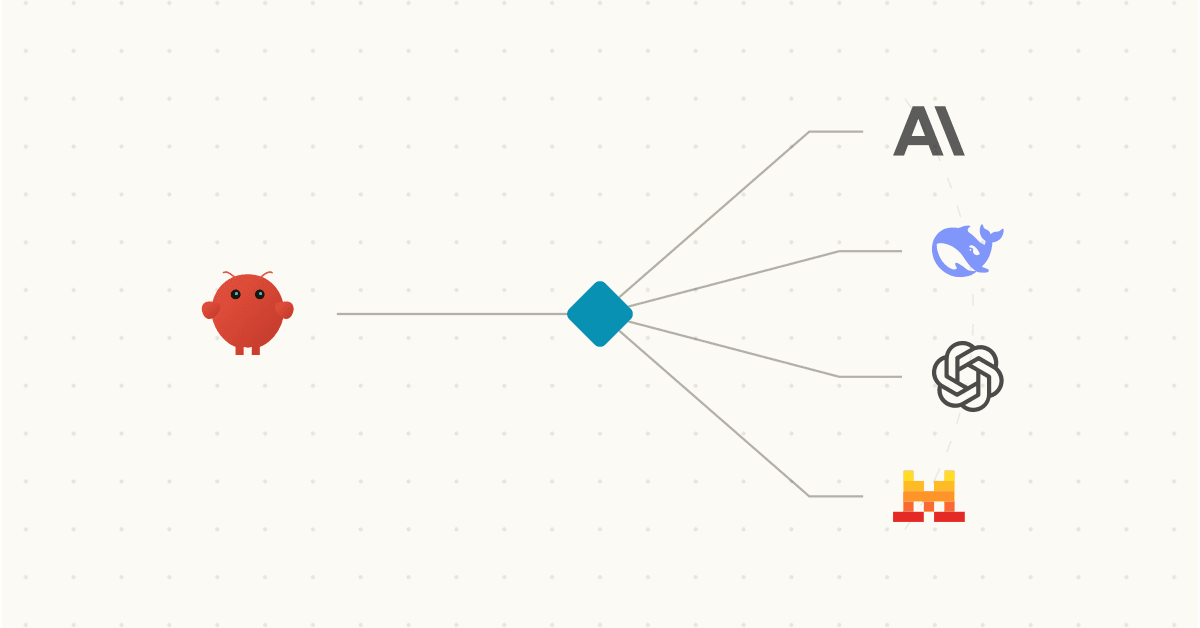

LLM Routing for OpenClaw and autonomous agents

By default, OpenClaw (like other autonomous agents) lets you connect to only one model. That model handles every request, from the simplest to the most demanding, from heartbeats to really complex tasks. This makes it hard for users to find a good trade-off: they either use a top-tier model and pay extra, or use a cheap model and get lower-quality outputs.

Manifest solves that problem by giving you four complexity tiers, each of which can be assigned a different model. It is a very effective way to use routing to reduce costs while maximizing the output quality of your OpenClaw agent. It is open source and comes in both a cloud version and a 100% local version.
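The idea of one model per complexity tier can be pictured as a simple lookup table. The sketch below is not Manifest's configuration format: the tier names, the model names and the `model_for` helper are hypothetical, and how a request gets assigned to a tier is left out because the text above does not describe it.

```python
from enum import IntEnum
from typing import Dict


class Tier(IntEnum):
    """Four complexity tiers; the names are assumptions for this example."""
    SIMPLE = 1      # e.g. heartbeats
    STANDARD = 2
    COMPLEX = 3
    REASONING = 4   # e.g. really complex tasks


# Hypothetical mapping: cheaper models for low tiers, stronger ones for high tiers.
TIER_MODELS: Dict[Tier, str] = {
    Tier.SIMPLE: "small-model",
    Tier.STANDARD: "medium-model",
    Tier.COMPLEX: "large-model",
    Tier.REASONING: "reasoning-model",
}


def model_for(tier: Tier, tier_models: Dict[Tier, str]) -> str:
    """Return the model configured for a tier, requiring all tiers to be set."""
    missing = [t.name for t in Tier if t not in tier_models]
    if missing:
        raise ValueError(f"no model configured for tiers: {', '.join(missing)}")
    return tier_models[tier]
```

With a table like this, a heartbeat classified as `Tier.SIMPLE` never reaches the top-tier model, which is where the cost saving comes from.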